It is the traditional period in which people make resolutions and predictions for the next year. I have always followed the path of least resistance and succumbed to the general view that I need to do the same, despite having little confidence that my predictions were anything more than guesses. It is therefore with much relief that I can report that, after reading Nate Silver’s book “The Signal and The Noise: Why So Many Predictions Fail – But Some Don’t”, I was correct. Not only have my predictions been little more than best guesses, but so have everyone else’s.

So, instead of making you read my less than accurate predictions for 2013, I am simply going to wish you happy tidings for 2013.

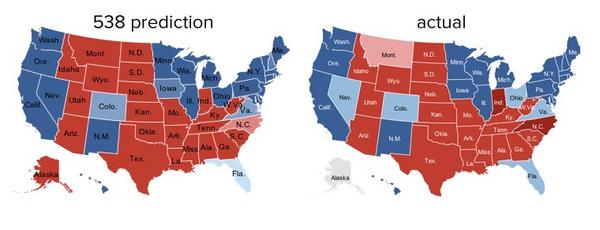

Silver leapt to prominence principally through his predictions of the past two US presidential elections on his 538 blog in the New York Times. There was much debate during the elections about the efficacy of Silver’s predictions, but they were pretty accurate. While Silver hasn’t paid any attention to demand planning, there are still some really valid lessons we can draw from his work. Chief amongst these is Silver’s finding that predictions tend to go bad because of biases, vested interests, and overconfidence. Images that immediately spring to mind are of a Sales & Operations Planning meeting to discuss the launch of some new product.

Perhaps the part of the book that struck the strongest chord with me comes early on, when Silver discusses the difference between uncertainty and risk. His assertion, with which I agree, is that we tend to confuse the two and therefore focus on the noise rather than the signal.

Risk, as first articulated by the economist Frank H. Knight in 1921, is something that you can put a price on. Say that you’ll win a poker hand unless your opponent draws to an inside straight: the chances of that happening are exactly 1 chance in 11. This is risk. It is not pleasant when you take a “bad beat” in poker, but at least you know the odds of it and can account for it ahead of time. In the long run, you’ll make a profit from your opponents making desperate draws with insufficient odds. Uncertainty, on the other hand, is risk that is hard to measure. You might have some vague awareness of the demons lurking out there. You might even be acutely concerned about them. But you have no real idea how many of them there are or when they might strike. Your back-of-the-envelope estimate might be off by a factor of 100 or by a factor of 1,000; there is no good way to know. This is uncertainty. Risk greases the wheels of a free-market economy; uncertainty grinds them to a halt.

I must admit that, not being an economist, I had to scratch my head a little at his explanation. An example that is easier for me to understand in a supply chain context is inventory levels, especially of finished goods. We keep safety stock because we are uncertain of the demand and don’t want to take the risk of missing an order because we don’t have any available inventory. (Of course we also keep cycle stock, which balances demand cycles, which tend to be more frequent, against supply cycles, which tend to be slower.)

What is important in Silver’s description is that risk is quantifiable. Unfortunately, in my example the risk is not quantifiable. However, there are approximate equations that use historical demand and the likelihood of being out of stock when an order arrives to determine the ideal safety stock levels. In this case the likelihood of being out of stock is a proxy for risk. The problem I have with these equations is that they do not include the likelihood of never being able to sell the inventory because the demand has disappeared. In other words, they do not measure the risk of excess and obsolete inventory.
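To make the "approximate equations" concrete, here is a minimal sketch of one common textbook approximation: safety stock as a service-level factor times the standard deviation of demand times the square root of the lead time. The post does not say which equation it has in mind, so the formula, the function name `safety_stock`, the assumption of normally distributed demand with a fixed lead time, and the demand figures are all illustrative assumptions.

```python
from math import sqrt
from statistics import NormalDist, stdev


def safety_stock(demand_history, lead_time_periods, service_level):
    """Textbook approximation: z * sigma_demand * sqrt(lead time).

    Assumes demand per period is roughly normal and lead time is fixed.
    service_level is the target probability of NOT stocking out
    during the replenishment lead time (for example 0.95).
    """
    z = NormalDist().inv_cdf(service_level)  # service-level factor
    sigma = stdev(demand_history)            # sample std dev of past demand
    return z * sigma * sqrt(lead_time_periods)


# Made-up weekly demand history, for illustration only.
weekly_demand = [100, 120, 90, 110, 130, 95, 105, 115]

ss_95 = safety_stock(weekly_demand, lead_time_periods=2, service_level=0.95)
ss_99 = safety_stock(weekly_demand, lead_time_periods=2, service_level=0.99)
print(f"95% service level: {ss_95:.1f} units")
print(f"99% service level: {ss_99:.1f} units")
```

Note what the function takes as input: past demand and a stock-out probability. Nothing in it represents the chance that demand disappears altogether, which is exactly the gap described above for excess and obsolete inventory.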

At the heart of Silver’s argument is that we should be using a Bayesian approach to prior probability, which he contrasts with the practices of “frequentism”, the statistical approach we’re most familiar with in supply chain. The basis of “frequentism” is to analyze how frequently something has occurred in the past and assume that it will occur with the same frequency in the future. In contrast, Bayesian models slowly whittle away wrongness and estimate how right we believe we are. They force us to ask how probable we believe something to be before collecting new evidence and revising our level of uncertainty.

In supply chain management we all too frequently assume that we know something with certainty, especially demand. In truth, there is a lot we do not know with certainty, including such things as available capacity and throughput rates. Silver analyzes fascinating examples ranging across all the areas in which we try to predict future outcomes, and stresses the gap between what we claim to know and what we actually know, which is at the heart of Bayesian analysis.

For example, in 1997, the US National Weather Service predicted that heavy winter snows would cause North Dakota’s Red River to flood over its banks in two months, cresting at 49 feet. The residents of Grand Forks were relieved, since their levees were designed to withstand a 51-foot crest. If the Weather Service had mentioned that the margin of error for its forecast was five feet, the three feet of water that poured over the levees in an eventual 54-foot crest might not have destroyed 75 percent of the town. I don’t think we need to think very hard about how this applies to our demand forecast in supply chain management, but it is a long time since I saw a statement of probability associated with a forecast, or better yet, a range forecast. This is the essence of Silver’s point.
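As a rough illustration of what a statement of probability or a range forecast adds to a point forecast, the sketch below uses the Red River numbers quoted above. It assumes the forecast error is normally distributed and, purely for illustration, treats the five-foot margin of error as one standard deviation. Neither assumption comes from the book or from the Weather Service, and the function names are hypothetical.

```python
from statistics import NormalDist


def exceedance_probability(point_forecast, error_sd, threshold):
    """Probability that the actual value ends up above the threshold,
    assuming normally distributed forecast error (an assumption)."""
    return 1.0 - NormalDist(mu=point_forecast, sigma=error_sd).cdf(threshold)


def forecast_range(point_forecast, error_sd, coverage=0.90):
    """Central interval expected to contain the actual value
    with the given coverage probability."""
    dist = NormalDist(mu=point_forecast, sigma=error_sd)
    tail = (1.0 - coverage) / 2.0
    return dist.inv_cdf(tail), dist.inv_cdf(1.0 - tail)


# Figures from the flood example: 49 ft forecast, 51 ft levees.
# error_sd=5.0 is an illustrative reading of the "margin of error".
p_overtop = exceedance_probability(point_forecast=49.0, error_sd=5.0, threshold=51.0)
low, high = forecast_range(point_forecast=49.0, error_sd=5.0)

print(f"Chance the crest exceeds the levees: {p_overtop:.0%}")
print(f"90% range forecast: {low:.1f} to {high:.1f} ft")
```

The same two functions apply to a demand forecast unchanged: replace the crest with forecast demand, the levee height with available supply or capacity, and the error spread with the historical forecast error.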
If those running the supply chain have some estimate of how much confidence people have in the forecast, they can operate the supply chain appropriately. A high degree of uncertainty should be balanced with a high degree of risk mitigation. The essence of Bayesian analysis is to provide an approach by which you change your existing beliefs in the light of new evidence. In other words, it allows people to combine new data with their existing knowledge or expertise on a continuous basis.

An illustrative example is to imagine that a very precocious newborn observes her first sunset, and wonders whether the sun will rise again or not. She assigns equal prior probabilities to both possible outcomes, and does this by placing one white and one black marble into a bag. The following day, when the sun rises, the child places another white marble in the bag. The probability that a marble plucked randomly from the bag will be white (in other words, the child’s degree of belief in future sunrises) has thus gone from a half to two-thirds. After sunrise the next day, the child adds another white marble, and the probability (and thus the degree of belief) goes from two-thirds to three-quarters. And so on. Gradually, the initial belief that the sun is just as likely as not to rise each morning is modified to become a near-certainty that the sun will always rise.

While we tend to focus on demand in these types of analysis, we should use the same approach for supply. Even if the initial supply plan is developed to satisfy the demand plan, we all know that as time progresses we will know with greater certainty what our true supply will be. I have always promoted the concept of Plan-Monitor-Respond, but it was only in reading Nate Silver’s book that I realized that it is, at its heart, a Bayesian approach. All too often we talk about demand or supply changing, when in fact we simply did not have a good enough estimate in the first place.
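The marble-in-a-bag example above can be sketched in a few lines. This is only an illustration of that counting scheme (it matches what is usually called Laplace's rule of succession). The function name `update_belief` and the supplier delivery figures are hypothetical and not taken from the book.

```python
from fractions import Fraction


def update_belief(successes, failures, prior_successes=1, prior_failures=1):
    """Degree of belief after observing the evidence.

    The prior is the starting bag: one white and one black marble.
    Each observed success adds a white marble, each failure a black one.
    The belief is the share of white marbles in the bag.
    """
    white = prior_successes + successes
    black = prior_failures + failures
    return Fraction(white, white + black)


# The sunrise example: belief after 0, 1 and 2 observed sunrises.
for sunrises in range(3):
    print(sunrises, update_belief(successes=sunrises, failures=0))
# 0 1/2
# 1 2/3
# 2 3/4

# The same update applied to supply (made-up numbers):
# a supplier delivered on time 18 times out of 20.
on_time_belief = update_belief(successes=18, failures=2)
print(f"Belief the next delivery is on time: {float(on_time_belief):.2f}")
```

Each new observation nudges the estimate instead of replacing it, which is the Plan-Monitor-Respond loop in miniature: start with a plan (the prior), monitor what actually happens, and respond by revising the estimate.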
Of course, demand and supply do change at times, but the truth of the matter is that we assumed that our initial estimates of demand and supply were accurate, and now they have to be revised in light of new evidence.

In conclusion, let me admit to being uncertain about what 2013 holds for all of us in supply chain management, and that I do not want to take the (almost certain) risk of being wrong. So instead I will simply wish you all the very best for 2013, and trust that you will revise your approach to supply chain management based upon the evidence that past approaches have been less than ideal.
