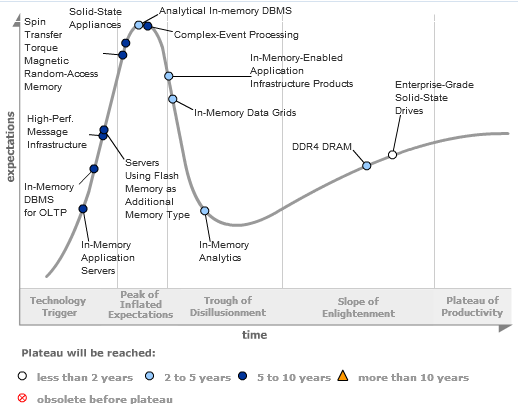

With good reason, there is a lot of focus across the IT community on in-memory computing, including the usual Gartner Hype Cycle (subscription required). There is no doubt that, over time, in-memory computing will improve the scope and scale of traditional on-disk applications, such as Business Intelligence (BI) and transaction systems such as ERP. But this will require a rewrite of many systems from the ground up, which is going to take time. BI, for its part, has already seen an immediate impact.

Hype Cycle for In-Memory Computing Technology

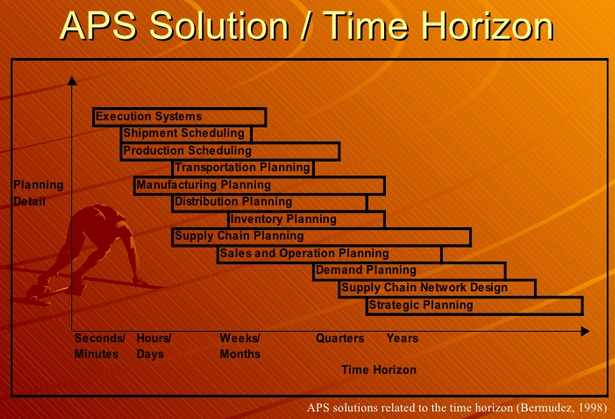

More importantly, the key question within the supply chain community is ‘so what?’ Most of the Advanced Planning and Scheduling (APS) solutions that emerged in the 1990s are based on in-memory technology. At the time, memory was expensive while disk space was relatively cheap. Plus, there were physical limits on the amount of memory in a computer, with 32-bit machines only able to address 4 GB of memory. Given the clock speed and architecture of CPUs at the time, the general approach to supply chain planning was ‘divide and conquer’ along traditional functional boundaries. Solutions were developed for different functions (Sales, Distribution, Manufacturing, Purchasing, Warehousing, Transportation…) and for different time horizons: short, medium and long.

Advanced Planning and Scheduling Systems Solutions Related to the Time Horizon

Nevertheless, the applications developed were in-memory. In addition, they used optimization techniques such as Linear Programming and Genetic Algorithms to try to generate better solutions faster. This was the core value proposition offered by the likes of i2 Technologies and Manugistics in the mid-1990s. I joined i2 in 1995 when they still had only one solution, Factory Planner. Shortly afterwards they developed Supply Chain Planner, and then acquired a number of companies with niche solutions for Demand Planning, Transportation Planning, and Scheduling/Sequencing. Manugistics followed a very similar acquisition trajectory.

By the late 1990s, when the revenues for both i2 and Manugistics exceeded $200M, the likes of SAP and Oracle began to develop (SAP) or acquire (Oracle) APS solutions. SAP’s Advanced Planning and Optimization suite (APO) was introduced in 1998, at the height of the APS boom, after a period of collaboration with i2 during which SAP sold i2’s Factory Planner as SAP PP/DS (Production Planning & Detailed Scheduling). The core concept used by all vendors at this time was that in-memory computing was required to get the speed needed to analyze the part of the supply chain for which the application was designed. Given the hardware and memory limitations at the time, these were often 3-tier architectures, if only because it was not possible to get enough memory to contain the whole model.

As a consequence, beyond the sheer processing speed available in today’s computers and the larger models that can fit in memory, the hype of in-memory computing will have little impact on supply chain planning solutions. That is, until the solutions are rewritten so that they no longer address only a narrow functional need. At the moment, the modular structure of APS solutions is the greatest barrier to improvements in decision latency and decision quality. Placing in-memory databases underneath fragmented architectures isn’t going to change this fact.

Do the Business Users Really Care?

Well, not really. That is, they do not care how the solutions are constructed. But they do care about how effective the organization is in solving their supply chain planning and response needs. As a recent report by Josh Greenbaum of Enterprise Applications Consulting points out:

An analysis of the factors that influence customer satisfaction in the Kinaxis customer base shows a clear conclusion. In-memory technology is not a primary factor in the relatively high degree of customer satisfaction that Kinaxis enjoys. In fact, while raw processing power is clearly an important part of how RapidResponse is used, speed is essentially an enabling technology that supports other reasons for using RapidResponse.

Josh Greenbaum goes on to state that:

...the fact is that RapidResponse has been about much more than in-memory computing for some time. Raw speed is important, but as Shellie Molina from First Solar puts it, “I am looking for a product that is fast and easy to use. How Kinaxis does it? I’m indifferent.” Sometimes a little indifference goes a long way.

At the end of the day, many aspects other than raw computing power affect the overall speed with which decisions are made. Every executive wants to know sooner about risk or opportunity, and they want people, process, and technology that allow them to act faster. Solutions that continue to address the specific needs of individual functions, rather than focusing on the concurrent nature of end-to-end supply chain planning, do not address the core issues faced by most executives today. Rapid trade-off across functional boundaries based upon what-if analysis is a core technical requirement to enable concurrent planning processes. To do this you need a lot more than an application that can crunch numbers quickly.
